Building a router - planning & shopping

I have a fair amount of Ubiquiti gear around the house: five switches, a Dream Machine Pro, three access points, a Security Gateway and a CloudKey. So you might rightfully assume that I actually like the brand. And I mostly do! … except for when I do not. A week ago was one of those days. An update for the Dream Machine basically broke all routing: if both modems - primary and secondary for failover - are connected, nothing goes in or out. The failover configuration is nowhere to be found in the configuration menu, and the only way to get the Internet working again was to connect the primary modem to the failover port. If the router had not been mounted in one of the racks in the basement, I would have thrown it out of the window right then and there.

So it is time for the Dream Machine to go. But what else to buy? Routers are mostly hot garbage. Some are okay, some require you to pay for a yearly license, and some just lack half of the features I want. Which means the only logical decision is to build my own… Right? RIGHT?!

Planning

I am only targeting symmetric 1Gbit routing for now, but next year there will be an opportunity to get my hands on 10Gbit, so the router should be able to handle that speed. I will not buy 10Gbit hardware right away, but I would like to have an upgrade path. Currently there is one router for our private network and one for the public one. My plan is to consolidate both into a single box while keeping the two networks properly separated. The power draw should end up roughly the same as running two dedicated routers. This also makes the box a single point of failure for both networks, but I am willing to live with that.

Besides routing, a few other services will run on the box: WireGuard, DHCP and DNS. I am not sure whether I will put in the effort to set up individual services or simply resort to Pi-hole, which would be fine too. DHCP is only needed for clients I do not care about - important systems get static IPs, because I am done dealing with "infinite" leases that are never actually infinite and fail at some point - and Pi-hole's ad blocking is as good as whatever I would script on top of dnsmasq and a hosts list pointing blocked domains at 0.0.0.0.
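For comparison, the "script on top of dnsmasq" route can be as small as turning a domain blocklist into a hosts file that points every blocked name at 0.0.0.0. A minimal Python sketch, assuming a plain-text blocklist with one domain per line and `#` comments; the function name `to_hosts` and the sample domains are hypothetical:

```python
def to_hosts(blocklist: str) -> str:
    """Convert a one-domain-per-line blocklist into 0.0.0.0 hosts entries."""
    seen = set()
    entries = []
    for raw in blocklist.splitlines():
        # Drop inline comments, surrounding whitespace and case differences.
        domain = raw.split("#", 1)[0].strip().lower()
        if not domain or domain in seen:
            continue
        seen.add(domain)
        entries.append(f"0.0.0.0 {domain}")
    return "".join(f"{entry}\n" for entry in entries)


# Hypothetical sample input; a real list would be downloaded or maintained by hand.
sample = """# example blocklist
ads.example.com
tracker.example.net  # inline comment
ADS.example.com
"""

print(to_hosts(sample), end="")
```

Written to a file, the output can be handed to dnsmasq through its `addn-hosts=` option, which reads additional hosts files on top of `/etc/hosts`. Keeping lists updated, a web UI and statistics are the parts Pi-hole adds on top.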

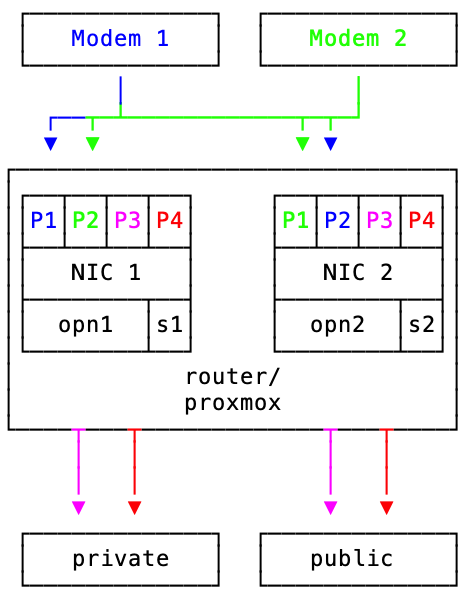

The plan for now is relatively simple: one x86-based system with two quad-port network cards, one per network. Proxmox virtualises two instances of OPNsense with three ports per instance. Two ports will be used for the modems and one to connect the actual network. The fourth port of each card will be mapped to a separate VM running DHCP and DNS.
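The port plan is easy to get wrong once VMs and cards multiply, so it can help to write it down as data and check it. A small Python sketch; the network labels, VM names and port numbering (0-3 per card) are hypothetical, and the rules encode only what the plan above states: every port used exactly once, two modem ports plus one network port per OPNsense instance, and no router VM attached to both cards.

```python
PORTS_PER_CARD = 4

# Hypothetical layout: one quad-port card per network, ports numbered 0-3.
LAYOUT = {
    "private": {"router": "opnsense-private", "wan": [0, 1], "lan": [2], "services": [3]},
    "public": {"router": "opnsense-public", "wan": [0, 1], "lan": [2], "services": [3]},
}


def problems(layout: dict) -> list[str]:
    """Return every rule the layout breaks; an empty list means it is consistent."""
    found = []
    routers = [card["router"] for card in layout.values()]
    if len(set(routers)) != len(routers):
        found.append("one router VM is attached to more than one network")
    for network, card in layout.items():
        used = card["wan"] + card["lan"] + card["services"]
        # Each physical port should be mapped exactly once.
        if sorted(used) != list(range(PORTS_PER_CARD)):
            found.append(f"{network}: ports {sorted(used)} are not 0-{PORTS_PER_CARD - 1} exactly once")
        # Two modems (primary + failover) and one uplink into the network.
        if len(card["wan"]) != 2 or len(card["lan"]) != 1:
            found.append(f"{network}: expected 2 WAN ports and 1 LAN port")
    return found


print(problems(LAYOUT) or "layout OK")
```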

Hardware-wise, several things have to be taken into consideration when building a router. Most CPUs you can buy should handle 1Gbit easily, but 10Gbit will require a bit more power. If you want to use VLANs, IDS/IPS or certain other commonly supported features, you will likely have to disable hardware offloading, which puts more load on your CPU. While there are a handful of expansion cards that bring some of the features of dedicated router chips to PCs, they are still expensive and likely not worth the money for small networks and speeds up to 10Gbit, considering you can get to 25Gbit with off-the-shelf hardware.
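To put "a bit more power" into numbers: a router does its work per packet, so the useful figure is packets per second at line rate. A small Python calculation, assuming standard Ethernet framing (20 bytes of preamble, start-of-frame delimiter and inter-frame gap on top of each frame, with frame sizes including header and FCS) and counting one direction only:

```python
WIRE_OVERHEAD_BYTES = 20  # 7 B preamble + 1 B start-of-frame delimiter + 12 B inter-frame gap


def line_rate_pps(link_bps: int, frame_bytes: int) -> int:
    """Maximum frames per second on a saturated Ethernet link (frame incl. header and FCS)."""
    return link_bps // ((frame_bytes + WIRE_OVERHEAD_BYTES) * 8)


for link_bps, label in ((1_000_000_000, "1Gbit"), (10_000_000_000, "10Gbit")):
    for frame_bytes in (64, 1518):  # smallest frame, full 1500-byte MTU frame
        pps = line_rate_pps(link_bps, frame_bytes)
        budget_ns = 1e9 / pps  # time available per packet
        print(f"{label:>6} {frame_bytes:>5} B: {pps:>12,} pps, ~{budget_ns:,.0f} ns per packet")
```

With minimum-size frames, 10Gbit leaves roughly 67 ns per packet compared to 672 ns at 1Gbit, which is why losing hardware offloading matters far more at the higher speed.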

Hardware Shopping

The router will be running 24/7 and will likely always see some load. While the lowest possible power draw was not the top priority, I am not trying to be wasteful, and not only for monetary reasons. (If I did not care, I would just get a beefy Xeon box from Dell or Lenovo and not bother with most of this.)

The CPU I chose is an AMD Ryzen 7 5700G: more than fast enough, decent idle power draw and a TDP of 65W, with some room to lower it to 45W. 8 cores and 16 threads means two cores per OPNsense instance, one for services and two for the host. The integrated GPU means no additional card taking up a valuable PCIe slot and no additional power draw. I will be using the boxed cooler, as I do not care about noise in my racks.
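Adding up the split shows it still leaves one core of headroom. A trivial Python check; the VM labels are hypothetical, and note that Proxmox schedules vCPUs on host threads rather than reserving physical cores by default, so this is a budget rather than a hard partition.

```python
PHYSICAL_CORES = 8
THREADS = 16  # SMT: two hardware threads per core

# Planned split from the paragraph above; the labels are hypothetical names.
plan = {
    "opnsense-private": 2,
    "opnsense-public": 2,
    "services (DHCP/DNS)": 1,
    "proxmox-host": 2,
}

assigned = sum(plan.values())
spare = PHYSICAL_CORES - assigned
assert spare >= 0, "plan needs more cores than the CPU has"
print(f"{assigned} of {PHYSICAL_CORES} cores assigned, {spare} spare "
      f"({spare * THREADS // PHYSICAL_CORES} threads)")
```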

The mainboard will be an MSI MPG B550 Gaming Plus. Yay, RGB in my server, something I always wanted. Two PCIe x16 slots (one wired as x16, one as x4) and two PCIe x1 slots mean there are enough slots for everything I need. Besides the slots, the board also supports ECC memory and RAID 1 for storage devices. While using a motherboard's RAID controller is not always the best idea, I am okay with it in this particular case. If the hardware dies, I can simply restore a backup of the VMs on a new host, so being able to recover the array matters less.
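The x4 slot is also plenty in terms of bandwidth for a quad-port 1Gbit card. A quick Python estimate, assuming the second x16 slot is electrically PCIe 3.0 x4 and that the quad-port i350 cards are PCIe 2.1 x4 devices like Intel's I350-T4; a link trains to the lower generation and narrower width of both ends, and the figures ignore packet-level protocol overhead:

```python
from fractions import Fraction

# Per-lane transfer rate (GT/s) and line-code efficiency for each PCIe generation.
PCIE_GENERATIONS = {
    1: (Fraction(5, 2), Fraction(8, 10)),   # 2.5 GT/s, 8b/10b encoding
    2: (Fraction(5), Fraction(8, 10)),      # 5 GT/s, 8b/10b encoding
    3: (Fraction(8), Fraction(128, 130)),   # 8 GT/s, 128b/130b encoding
}


def link_gbps(gen: int, lanes: int) -> Fraction:
    """Usable bandwidth per direction after line coding (packet overhead not included)."""
    rate, efficiency = PCIE_GENERATIONS[gen]
    return rate * efficiency * lanes


def negotiated(slot: tuple[int, int], card: tuple[int, int]) -> Fraction:
    """A link trains to the lower generation and the narrower width of both ends."""
    return link_gbps(min(slot[0], card[0]), min(slot[1], card[1]))


# Assumptions: slot = (gen 3, x4), quad-port i350 card = (gen 2, x4).
available = negotiated(slot=(3, 4), card=(2, 4))
needed = 4 * 1  # four 1Gbit ports, per direction
print(f"{float(available):.1f} Gbit/s available, {needed} Gbit/s needed")
```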

For memory I got two 16GB sticks of Mushkin ECC memory. There are only two types of systems I am willing to run without ECC memory: gaming rigs and Apple Silicon, which, as far as I know, does not support ECC. This is also one of the reasons our main workstations are all x86-based and will not see an Apple Silicon upgrade soon. I am not certain whether Apple has figured out something that makes ECC irrelevant, but I think that would have been discussed widely enough to be impossible to miss. Being able to use ECC memory on a consumer chip is amazing and was the reason I did not even consider an Intel-based box.

All of this goes into a Chenbro chassis and is powered by a 400W Seasonic power supply.

Network card selection was driven heavily by the chip on the card. My general recommendation is always to buy Intel: their NICs just work and are well supported. I ended up with two i350 NICs.

Random Notes

Hardware shopping was a game of tradeoffs: balancing power efficiency, ECC support and general availability messed with my initial plan. The first case I selected would not have fit the mainboard, despite both being ATX. With the current CPU and chipset I am missing out on a few PCIe lanes and on PCIe 4.0. Both are acceptable tradeoffs.

I might try Proxmox's virtual network interfaces and see if there are any issues, but the i350 chip is well supported by OPNsense, so if anything does not work as expected I can simply pass the ports directly through to the VM. There have been reports of poor performance with virtualised interfaces, but hardware passthrough requires manual configuration and makes moving VMs to another host impossible. Intel's i350 also supports SR-IOV, which might be another option.
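The difference between the two approaches shows up directly in the VM configuration. A minimal Python sketch that only builds the `qm set` commands Proxmox uses for each option (it does not run them); the VM ID, bridge name and PCI address are hypothetical. A virtio NIC references a bridge on the host, so the VM can move to any host with the same bridge, while a `hostpci` entry references a physical PCI function on this particular machine, which is what pins the VM to it. Passthrough additionally requires IOMMU to be enabled on the host, and an SR-IOV virtual function would be passed through the same way, just with the VF's PCI address.

```python
import shlex


def virtio_nic(vmid: int, index: int, bridge: str) -> list[str]:
    """Paravirtualised NIC attached to a host bridge; portable between hosts."""
    return ["qm", "set", str(vmid), f"--net{index}", f"virtio,bridge={bridge}"]


def passthrough_nic(vmid: int, index: int, pci_address: str) -> list[str]:
    """Hand a physical PCI function (one NIC port or an SR-IOV VF) to the guest."""
    return ["qm", "set", str(vmid), f"--hostpci{index}", pci_address]


# Hypothetical VM ID, bridge name and PCI address; check yours with `lspci`.
for cmd in (
    virtio_nic(101, 0, "vmbr1"),
    passthrough_nic(101, 1, "0000:03:00.1"),
):
    print(shlex.join(cmd))
```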

I did not list storage, as I have a few Samsung SSDs lying around and will simply throw two of them in. The router needs neither lots of storage nor high-performance storage. As long as I have two of the same type and capacity, it will be fine. I think there are four 1TB Samsung 870 Evos somewhere in the storage box.

This will not be the last change to my network. There are still UniFi switches and access points in use which are now without controller software, and I will bother neither to host the controller myself nor to use Ubiquiti's cloud offering. That hardware will be replaced.

Next Steps

As of today, part of the hardware has been delivered. I am still waiting on the network cards, but they should be here next week. I do not think I will get to actually building, setting up and configuring everything before Christmas. Which also means I cannot tell you all the details, and where my plan failed thanks to incompatibilities, software or my own incompetence, until next year - so there's that to look forward to.